Artificial Intelligence

Category: Learning Levels

Video auto-dubbing using Amazon Translate, Amazon Bedrock, and Amazon Polly

This post is co-written with MagellanTV and Mission Cloud. Video dubbing, or content localization, is the process of replacing the original spoken language in a video with another language while synchronizing audio and video. Video dubbing has emerged as a key tool in breaking down linguistic barriers, enhancing viewer engagement, and expanding market reach. However, […]

Improve RAG accuracy with fine-tuned embedding models on Amazon SageMaker

This post demonstrates how to use Amazon SageMaker to fine-tune a Sentence Transformer embedding model and deploy it with an Amazon SageMaker endpoint. The code from this post and more examples are available in the GitHub repo.

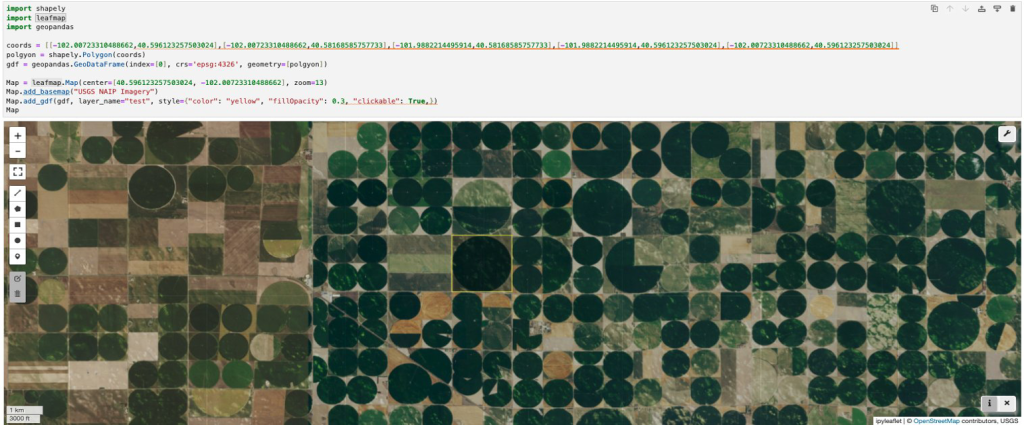

Create custom images for geospatial analysis with Amazon SageMaker Distribution in Amazon SageMaker Studio

This post shows you how to extend Amazon SageMaker Distribution with additional dependencies to create a custom container image tailored for geospatial analysis. Although the example in this post focuses on geospatial data science, the methodology presented can be applied to any kind of custom image based on SageMaker Distribution.

Automating model customization in Amazon Bedrock with AWS Step Functions workflow

Large language models have become indispensable in generating intelligent and nuanced responses across a wide variety of business use cases. However, enterprises often have unique data and use cases that require customizing large language models beyond their out-of-the-box capabilities. Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) […]

Fine-tune Anthropic’s Claude 3 Haiku in Amazon Bedrock to boost model accuracy and quality

Frontier large language models (LLMs) like Anthropic Claude on Amazon Bedrock are trained on vast amounts of data, allowing them to understand and generate human-like text. Fine-tuning Anthropic Claude 3 Haiku on proprietary datasets can provide optimal performance on specific domains or tasks. Fine-tuning, as a deep level of customization, represents a key […]

Generate unique images by fine-tuning Stable Diffusion XL with Amazon SageMaker

Stable Diffusion XL by Stability AI is a high-quality text-to-image deep learning model that allows you to generate professional-looking images in various styles. Managed versions of Stable Diffusion XL are already available to you on Amazon SageMaker JumpStart (see Use Stable Diffusion XL with Amazon SageMaker JumpStart in Amazon SageMaker Studio) and Amazon Bedrock (see […]

Build your multilingual personal calendar assistant with Amazon Bedrock and AWS Step Functions

This post shows you how to apply AWS services such as Amazon Bedrock, AWS Step Functions, and Amazon Simple Email Service (Amazon SES) to build a fully automated multilingual calendar artificial intelligence (AI) assistant. The assistant interprets incoming messages, translates them to the preferred language, and automatically sets up calendar reminders.

Medical content creation in the age of generative AI

Generative AI and transformer-based large language models (LLMs) have been in the top headlines recently. These models demonstrate impressive performance in question answering, text summarization, and code and text generation. Today, LLMs are being used in real settings by companies, including those in the heavily regulated healthcare and life sciences (HCLS) industry. The use cases can range from medical […]

Introducing guardrails in Amazon Bedrock Knowledge Bases

Amazon Bedrock Knowledge Bases is a fully managed capability that helps you securely connect foundation models (FMs) in Amazon Bedrock to your company data using Retrieval Augmented Generation (RAG). This feature streamlines the entire RAG workflow, from ingestion to retrieval and prompt augmentation, eliminating the need for custom data source integrations and data flow management. […]

Accelerated PyTorch inference with torch.compile on AWS Graviton processors

Originally, PyTorch used an eager mode in which each PyTorch operation that forms the model is run independently as soon as it’s reached. PyTorch 2.0 introduced torch.compile to speed up PyTorch code over the default eager mode. In contrast to eager mode, torch.compile pre-compiles the entire model into a single graph in a manner that’s optimal for […]